Enterprise software teams are encountering a scenario that would have been unthinkable three years ago: customers sign contracts for millions of AI tokens per month, yet a single power user can consume that entire allocation in days. By the time traditional billing systems detect the overage, the cost has already been incurred.

This is not just a billing problem. It is a governance problem, and it requires a real-time usage control layer that most SaaS stacks were never designed to support. And nearly every company shipping AI features is about to face it.

The Problem: Billing Systems Weren’t Built for Real-Time Enforcement

Traditional SaaS billing operates on a simple premise: count usage, aggregate it, send an invoice. Whether tracking seats, API calls, or storage, the pattern is the same. Events are collected, batch processed, and invoiced monthly. This model worked for more than a decade of SaaS growth.

AI breaks this model.

When a single inference request can cost $0.50 or more for complex reasoning models, and a runaway automation can generate thousands of requests per minute, the gap between “usage happened” and “billing caught up” becomes a financial chasm. OpenAI’s inference costs alone represented 50% of its 2024 revenues. That is a company built around AI economics. For traditional SaaS companies layering AI into existing products, the exposure can be even sharper.
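A back-of-the-envelope sketch makes the exposure concrete. Only the $0.50 per-request figure comes from the paragraph above; the request rate and the detection delays are illustrative assumptions, and `overage_exposure` is a hypothetical helper.

```python
def overage_exposure(cost_per_request: float,
                     requests_per_minute: int,
                     detection_delay_minutes: float) -> float:
    """Cost incurred between the start of a runaway workload and its detection."""
    return cost_per_request * requests_per_minute * detection_delay_minutes


# Assumed scenario: a runaway automation sending 1,000 requests per minute.
daily_batch = overage_exposure(0.50, 1_000, 24 * 60)  # caught by a daily batch job
one_minute = overage_exposure(0.50, 1_000, 1)         # caught within one minute

print(f"${daily_batch:,.0f} vs ${one_minute:,.0f}")   # $720,000 vs $500
```

Under these assumptions, the difference between daily batch detection and one-minute detection is roughly three orders of magnitude in unrecoverable cost.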

Product leaders increasingly want experiences similar to ChatGPT’s cool-down periods. When users hit their limit, they should see a message and be throttled, not surprised with a large invoice at the end of the month.

The challenge is structural. Most billing infrastructure was designed for post-facto reconciliation, not real-time enforcement. A Stripe integration is excellent at charging customers after they have consumed resources. It is not designed to prevent them from consuming resources they have not paid for.

Why This Is Happening Now

Three converging forces are making AI usage governance an urgent engineering priority.

First, the economics of inference are materially different from traditional software. Marginal cost does not approach zero. Every token processed consumes GPU compute, electricity, and often premium model access fees. Gartner predicts that by 2028, more than 50% of enterprises that built large AI models from scratch will abandon their efforts due to cost, complexity, and technical debt. For companies reselling AI capabilities through embedded features or APIs, this creates a fundamental revenue and cost alignment problem. If pricing does not closely track costs, and enforcement does not closely track pricing, margins erode quickly.

Second, enterprise customers increasingly demand self-service controls. Organizations want to allocate AI budgets to departments, teams, or individual users and have those allocations enforced automatically. They expect configurable alerts as usage approaches defined thresholds and hard stops when limits are reached. This is not only about preventing overage charges. It is about organizational governance. Enterprises need to forecast AI costs, distribute budgets across cost centers, and demonstrate ROI by business unit. Without granular usage controls, AI becomes an unpredictable line item that finance teams struggle to model and executives hesitate to scale.

According to Deloitte’s 2026 State of AI in the Enterprise report, worker access to AI rose by 50% in 2025 with continued growth expected. Yet only 29% of organizations report having comprehensive AI governance plans in place. Adoption is accelerating faster than control systems.

Third, the credit model is becoming standard, but implementation is complex. Hybrid pricing models that combine base subscriptions, entitlements, and usage-based credits have become widely adopted across SaaS as teams look to better align value and cost. Credits provide predictability for customers while allowing flexible consumption and abstraction over tokens, models, and inference variability.

However, credits introduce new operational challenges. Promotional credits may require different accounting treatment than paid credits. Enterprise customers often need to subdivide credit pools across subsidiaries or teams. Balances must be tracked in real time with sub-second accuracy. Rollover, expiration, and revenue recognition rules add further complexity. At scale, these details become infrastructure problems rather than billing edge cases.
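As a rough illustration of why these details become infrastructure, here is a minimal in-memory sketch of a credit ledger that keeps promotional and paid grants separate and honors expiration. The class names, the `kind` values, and the consumption order (promotional first, then earliest expiry) are assumptions made for the example, not a prescribed accounting policy. A production ledger would also need durable storage and atomic updates.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class CreditGrant:
    grant_id: str
    kind: str                              # "promotional" or "paid" (assumed labels)
    remaining: int
    expires_at: Optional[datetime] = None  # None means the grant never expires


class CreditLedger:
    def __init__(self) -> None:
        self.grants: List[CreditGrant] = []

    def add_grant(self, grant: CreditGrant) -> None:
        self.grants.append(grant)

    def _active(self, now: datetime) -> List[CreditGrant]:
        # Grants with credits left that have not expired yet.
        return [g for g in self.grants
                if g.remaining > 0 and (g.expires_at is None or g.expires_at > now)]

    def balance(self, now: datetime) -> int:
        return sum(g.remaining for g in self._active(now))

    def consume(self, amount: int, now: datetime) -> bool:
        """Deduct `amount` credits, or change nothing and return False."""
        active = self._active(now)
        if sum(g.remaining for g in active) < amount:
            return False
        # Assumed policy: promotional before paid, then soonest expiry first.
        active.sort(key=lambda g: (g.kind != "promotional",
                                   g.expires_at is None,
                                   g.expires_at or now))
        for grant in active:
            take = min(grant.remaining, amount)
            grant.remaining -= take
            amount -= take
            if amount == 0:
                break
        return True


now = datetime(2026, 1, 15, tzinfo=timezone.utc)
ledger = CreditLedger()
ledger.add_grant(CreditGrant("paid-1", "paid", 500))
ledger.add_grant(CreditGrant("promo-1", "promotional", 100, now + timedelta(days=30)))
ledger.add_grant(CreditGrant("promo-old", "promotional", 50, now - timedelta(days=1)))

ok = ledger.consume(150, now)   # uses all 100 promotional credits, then 50 paid
print(ok, ledger.balance(now))  # True 450
```

Keeping each grant as its own record, rather than a single balance number, is what lets finance later distinguish which credits were consumed and which expired.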

What Good Looks Like: The Three Layers of AI Usage Governance

Across companies at different stages of AI monetization maturity, effective governance consistently rests on three layers.

The foundation is real-time metering. Usage must be measured immediately, with high accuracy and sufficient dimensional detail to support flexible policy decisions. This means sub-second event ingestion, tracking across relevant dimensions such as model, user, feature, and customer tier, and idempotent processing so that retried or duplicated events are still counted exactly once. Techniques such as token bucket algorithms, sliding window counters, and event sourcing are familiar patterns. What has changed is that metering now sits on the critical path of product experience rather than in a background analytics pipeline.
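The idempotency requirement can be sketched as deduplication on a producer-supplied event ID, combined with roll-ups along a few dimensions. Everything here is hypothetical and in-memory (`UsageEvent`, `Meter`, the chosen dimensions); a real metering service would back the seen-ID set and the counters with a durable, shared store.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Set, Tuple


@dataclass(frozen=True)
class UsageEvent:
    event_id: str  # idempotency key chosen by the producer
    customer: str
    user: str
    model: str
    tokens: int


class Meter:
    def __init__(self) -> None:
        self._seen: Set[str] = set()
        self._totals: Dict[Tuple[str, ...], int] = defaultdict(int)

    def record(self, event: UsageEvent) -> bool:
        """Count the event once; return False if it was a duplicate delivery."""
        if event.event_id in self._seen:
            return False
        self._seen.add(event.event_id)
        # Roll the same event up along each dimension a policy might query.
        for key in (("customer", event.customer),
                    ("user", event.customer, event.user),
                    ("model", event.customer, event.model)):
            self._totals[key] += event.tokens
        return True

    def total(self, *key: str) -> int:
        return self._totals.get(key, 0)


meter = Meter()
event = UsageEvent("evt-1", "acme", "alice", "large-model", 120)
first = meter.record(event)
retry = meter.record(event)  # e.g. a client retry after a timeout

print(first, retry, meter.total("customer", "acme"))  # True False 120
```

Because duplicates are dropped at ingestion, producers can retry freely after a timeout without inflating a customer's usage.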

The second layer is policy enforcement. Metering records what happened. Enforcement determines what is allowed to happen next. This requires synchronous checks before inference execution, support for hierarchical limits across users, teams, and organizations, and configurable responses when thresholds are reached. Some customers require hard blocks, while others prefer throttling or degraded model quality. Many want soft limits with alerts before enforcement occurs. Architecturally, teams typically combine edge-level rate limiting for abuse prevention with application-level quota checks for business policy enforcement.
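A synchronous, hierarchical quota check might look roughly like the sketch below. The `Decision` values, the 80% soft threshold, and the scope naming (`user:`, `team:`, `org:`) are assumptions for illustration. The check evaluates every level of the hierarchy and returns the most restrictive outcome before the inference call is made.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Dict, List


class Decision(IntEnum):
    # Ordered by severity so that max() picks the most restrictive outcome.
    ALLOW = 0
    WARN = 1      # soft limit reached: proceed, but alert
    THROTTLE = 2
    BLOCK = 3


@dataclass
class Limit:
    scope: str                           # e.g. "user:alice", "team:research"
    quota: int                           # tokens allowed in the current period
    soft_ratio: float = 0.8              # assumed alert threshold
    on_exceed: Decision = Decision.BLOCK


def check(limits: List[Limit], used: Dict[str, int], requested: int) -> Decision:
    """Run before inference; `used` holds current usage per scope."""
    result = Decision.ALLOW
    for limit in limits:
        projected = used.get(limit.scope, 0) + requested
        if projected > limit.quota:
            decision = limit.on_exceed
        elif projected >= limit.soft_ratio * limit.quota:
            decision = Decision.WARN
        else:
            decision = Decision.ALLOW
        result = max(result, decision)
    return result


limits = [
    Limit("user:alice", 1_000, on_exceed=Decision.THROTTLE),
    Limit("team:research", 5_000),
    Limit("org:acme", 100_000),
]

# The user is within budget, but the team pool would be exceeded.
used = {"user:alice": 700, "team:research": 4_990, "org:acme": 50_000}
print(check(limits, used, requested=50).name)  # BLOCK
```

In this sketch the caller decides what each decision means in practice, for example returning a cool-down message on `THROTTLE` or routing to a cheaper model.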

The third layer is self-service administration. This is often the most underinvested component. Enterprise customers expect to manage their own governance policies without filing support tickets. They want administrative interfaces for budget allocation, dashboards that show burn rate and projected usage, configurable alerts, and audit logs that capture policy changes over time. At this layer, governance stops being back-office infrastructure and becomes an organizational control plane embedded in the product itself.
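Two of these pieces, projected usage and an audit trail for policy changes, can be sketched in a few lines. The linear burn-rate projection is a deliberately naive assumption (real dashboards may weight recent days or account for seasonality), and `BudgetAdmin` and `AuditEntry` are hypothetical names.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional


def projected_usage(used: int, days_elapsed: int, days_in_period: int) -> float:
    """Linear projection: assume the average daily burn rate continues."""
    if days_elapsed <= 0:
        raise ValueError("days_elapsed must be positive")
    return used / days_elapsed * days_in_period


def days_until_exhaustion(used: int, budget: int, days_elapsed: int) -> Optional[float]:
    """Days left at the current burn rate, or None if nothing has been used."""
    if days_elapsed <= 0:
        raise ValueError("days_elapsed must be positive")
    burn_rate = used / days_elapsed
    if burn_rate == 0:
        return None
    return max(budget - used, 0) / burn_rate


@dataclass(frozen=True)
class AuditEntry:
    at: datetime
    actor: str
    scope: str
    old_limit: Optional[int]
    new_limit: int


@dataclass
class BudgetAdmin:
    limits: Dict[str, int] = field(default_factory=dict)
    audit_log: List[AuditEntry] = field(default_factory=list)

    def set_limit(self, actor: str, scope: str, new_limit: int) -> None:
        # Record who changed what, and from which value, before applying it.
        self.audit_log.append(AuditEntry(datetime.now(timezone.utc), actor, scope,
                                         self.limits.get(scope), new_limit))
        self.limits[scope] = new_limit


admin = BudgetAdmin()
admin.set_limit("admin@example.com", "team:research", 600_000)
admin.set_limit("admin@example.com", "team:research", 900_000)

print(projected_usage(300_000, days_elapsed=10, days_in_period=30))          # 900000.0
print(days_until_exhaustion(300_000, budget=600_000, days_elapsed=10))       # 10.0
```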

The Contrarian Take: Governance Is a Feature, Not a Tax

Many companies treat usage governance as a margin protection mechanism. It is funded by finance, owned by billing teams, and built to be merely adequate.

This framing limits its strategic value.

The companies gaining traction in enterprise AI treat governance as customer value creation. Granular AI controls allow organizations to run controlled experiments, measure ROI by department, differentiate access based on risk profiles, and forecast future spend with confidence. When customers trust the guardrails, they scale usage more aggressively, not less.

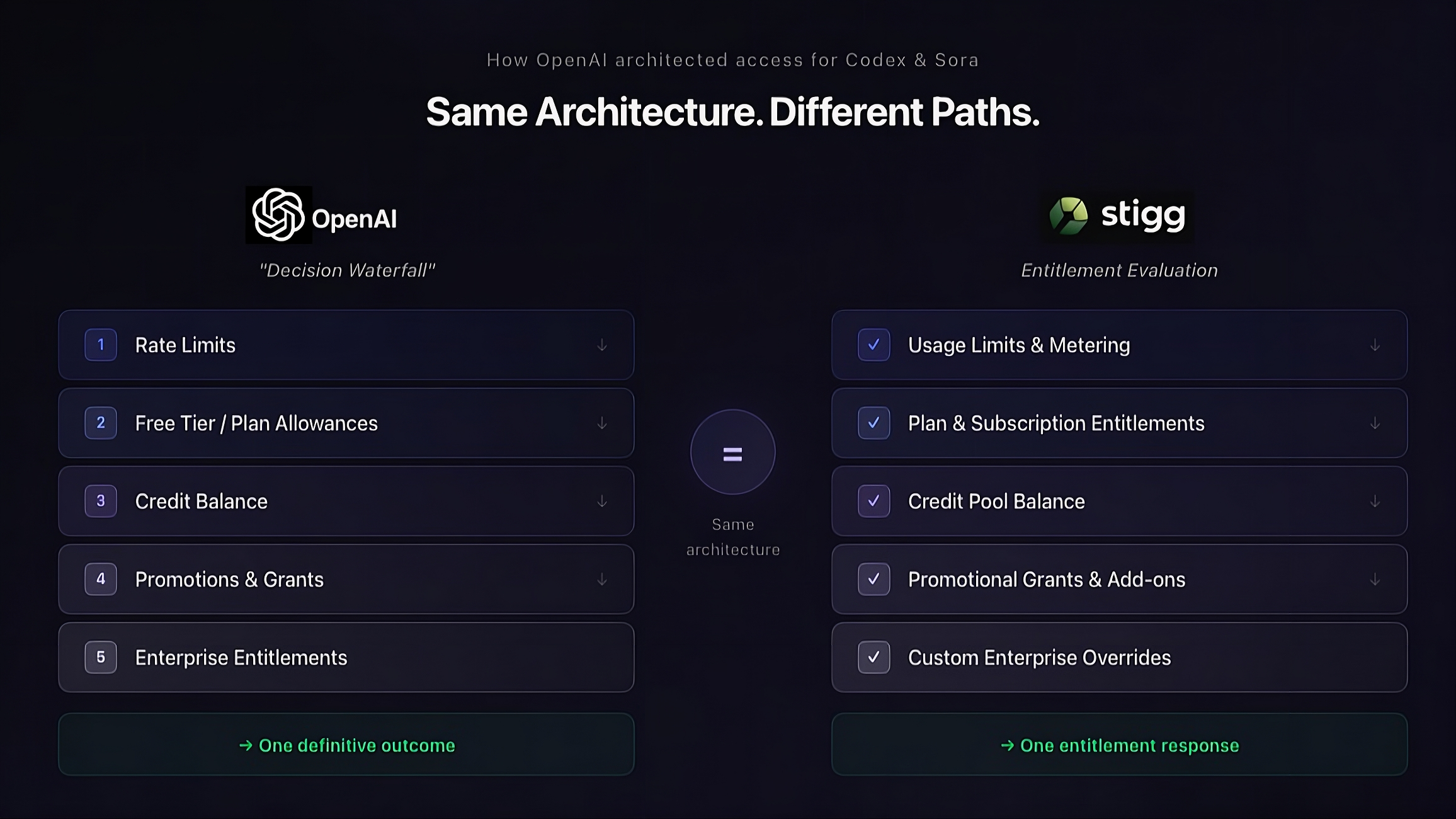

Some AI infrastructure providers are now building governance capabilities that their customers can embed into their own products. Governance is evolving into a platform feature rather than a constraint layered on top of billing. This shift mirrors the broader evolution from static plans to programmable entitlements and advanced metering across modern SaaS platforms.

The Takeaway

If you are shipping AI features in 2026, governance cannot be an afterthought. It must be designed alongside pricing and product architecture from the beginning.

Winning companies are building three capabilities in parallel: real-time metering with sub-second latency, synchronous policy enforcement for blocking and throttling, and self-service administration that turns governance into differentiation.

The shift from seats to tokens is not simply a pricing adjustment. It forces a rethinking of how software companies align value, cost, and control.

The billing systems built for traditional SaaS will not be sufficient. The governance systems built for AI may become your most durable competitive advantage.

If you are rethinking your pricing architecture for AI, governance needs to sit on the critical path. Check out our docs or talk with our team to discuss how to implement real-time metering and enforcement without slowing down your inference pipeline.